5 Tips for Better Surveys That Yield Results

Software evaluations are a fact of life in the corporate world. They give businesses the information they need to make strategic decisions, from expanding their software offerings to determining whether new products should be purchased or old ones upgraded. But users often dismiss surveys because of poor design and vague (or missing) instructions about how to respond. Follow these tips to create software evaluation surveys that yield results!

What Makes a Good Software Evaluation Survey?

Start by asking, “What do you want to get out of the survey?” During a software or technology evaluation project, it’s important to talk not only to your project stakeholders but also to end-users. When end-users are engaged in the software selection or evaluation process (even if you only ask about pain points and ideas for improvement), implementation goes more smoothly, user adoption improves, and ROI increases.

How questions are phrased and the types of answers requested can affect what you get out of the survey. Here are 5 tips to help you create better software evaluation surveys that yield results!

1. Be Direct in Your Survey Questions for Software Requirements Gathering

Ask questions in a way that gets specific, actionable feedback. Your questions should be direct so that they elicit data you can act on (or insights with which to compare vendor capabilities).

Example

Question: How many hours per week do you spend on _______?

Answer: 0-1, 2-4, 5+

2. One Thing Per Survey Question

If a question has more than one thing to answer, your data will be skewed. Which part of the question have they answered, and how do you know? Before sending out your survey, check for double-barreled questions by looking for “and” or “or.”
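The “and”/“or” check above can be automated as a first pass before a human review. The following is a minimal sketch, assuming the draft questions are available as plain strings; the function name and sample questions are hypothetical, and the check is only a heuristic, since some flagged questions will turn out to be fine.

```python
import re

# Heuristic only: matches "and" or "or" as standalone words,
# so words such as "vendor" or "reporting" are not flagged.
CONJUNCTION = re.compile(r"\b(and|or)\b", re.IGNORECASE)


def flag_double_barreled(questions):
    """Return the questions that contain a standalone 'and' or 'or'."""
    return [q for q in questions if CONJUNCTION.search(q)]


# Hypothetical draft questions for illustration.
draft_questions = [
    "How many hours per week do you spend on reporting?",
    "How satisfied are you with the speed and reliability of the tool?",
    "Which vendor portal do you use most often?",
]

for question in flag_double_barreled(draft_questions):
    print("Review:", question)
```

A flagged question is a candidate for splitting into two, for example one question about speed and one about reliability.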

3. Avoid Leading Survey Questions

Leading questions lead to bias, and bias leads to skewed data. It’s important to be neutral when phrasing questions. It may be helpful to have a colleague review the survey before sending it. Remember, you’re looking for information to help your company choose the best vendor/software to solve [insert your business reason here]. Steering respondents toward specific answers will only waste time and money.

4. Be Careful with Your Answer Types

Do you want multi-checkbox, true/false, number scale or open-ended questions? It’s best to include several different types of answers. Getting more detailed as you ask questions can really help get to the actionable insight you’re looking for with the evaluation.

Open-ended questions give respondents a free text field in which to provide comments, thoughts, and ideas, but research shows that respondents are more likely to answer closed-ended questions that offer answers to select from. A closed-ended question with an “other” option is also answered more often than an open-ended question where respondents have to volunteer the answer themselves.

Any multiple-choice question should give respondents an “other” answer option. This keeps respondents from picking a random choice when none fits (and skewing the data), and when they see “other,” they know they have the option of providing their own answer.
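If the survey is drafted in a structured form, the “other” rule can be checked before sending. This is a rough sketch, assuming each question is a dictionary with hypothetical `text`, `type`, and `choices` keys; a real survey tool will have its own format.

```python
def missing_other_option(questions):
    """Return the text of each multiple-choice question that lacks an 'Other' choice."""
    missing = []
    for q in questions:
        if q["type"] != "multiple_choice":
            continue  # only multiple-choice questions need an "Other" option
        choices = [c.strip().lower() for c in q["choices"]]
        if "other" not in choices:
            missing.append(q["text"])
    return missing


# Hypothetical survey definition for illustration.
survey = [
    {"text": "Which tool do you use for reporting?",
     "type": "multiple_choice",
     "choices": ["Spreadsheets", "BI dashboard", "Other"]},
    {"text": "How do you submit requests?",
     "type": "multiple_choice",
     "choices": ["Email", "Ticketing system"]},
    {"text": "What would you improve first?",
     "type": "open_ended",
     "choices": []},
]

for text in missing_other_option(survey):
    print("Add an 'Other' option to:", text)
```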

5. Proving the ROI of Gleaning Insights Through Surveys for Software Requirements Gathering

Surveying end-users and using their feedback in your evaluation process will improve your time to adoption during implementation and beyond. Take some time to craft a survey that gives you actionable insights to use during your evaluation. Then, go back to that data post-implementation to verify that the problem you set out to solve was indeed solved.

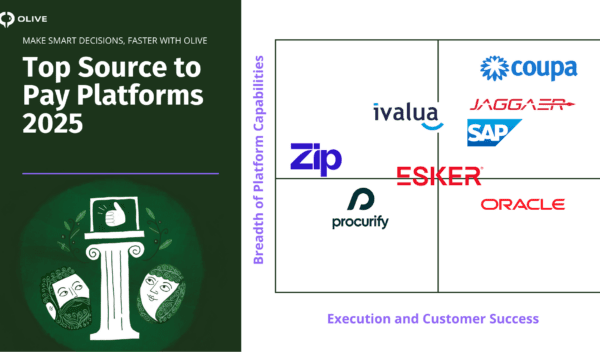

Olive for Software Evaluation Surveys

Olive’s surveys facilitate a seamless collaboration process, helping you easily capture and record feedback from stakeholders across the organization. Save countless hours by avoiding long meetings, stakeholder interviews, and manual entry of requirements into spreadsheets. See for yourself: try Olive for 14 days.